Suggestion: "Output Files", then "Winsteps control file", is a convenient way to produce a rectangular picture of your judging plan from your data.

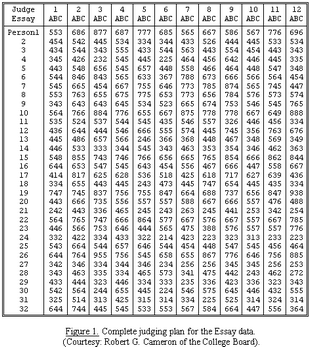

The only requirement on the judging plan is that there be enough linkage between all elements of all facets that all parameters can be estimated without indeterminacy within one frame of reference. Fig. 1 illustrates an ideal judging plan for both conventional and Rasch analysis. The 1152 ratings shown are a set of essay ratings from the Advanced Placement Program of the College Board. These are also discussed in Braun (1988). This judging plan meets the linkage requirement because every element can be compared directly and unambiguously with every other element. Thus it provides precise and accurate measures of all parameters in a shared frame of reference. For robust estimation of measures, we need at least 30 observations of each element, and at least 10 observations of each rating-scale category.
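The rule of thumb about observation counts can be checked before an analysis is run. The following is a minimal Python sketch, not part of Facets: the function names, the tuple layout (person, rater, essay, rating) and the four sample rows are assumptions made for illustration, and the thresholds are simply the 30 and 10 quoted above.

```python
from collections import Counter

# Thresholds taken from the rule of thumb in the text.
MIN_PER_ELEMENT = 30
MIN_PER_CATEGORY = 10

def count_observations(ratings):
    """Count observations per element and per rating category.

    ratings: iterable of (person, rater, essay, rating) tuples
    (an assumed layout, matching the column order of the data lines).
    """
    element_counts = Counter()
    category_counts = Counter()
    for person, rater, essay, rating in ratings:
        element_counts[("person", person)] += 1
        element_counts[("rater", rater)] += 1
        element_counts[("essay", essay)] += 1
        category_counts[rating] += 1
    return element_counts, category_counts

def under_observed(counts, minimum):
    """Return the keys whose count falls below the minimum, sorted."""
    return sorted(key for key, n in counts.items() if n < minimum)

# A few illustrative rows only; a real check would use the full data set.
sample = [(3, 1, 1, 4), (6, 1, 1, 5), (12, 1, 1, 4), (13, 1, 2, 4)]
element_counts, category_counts = count_observations(sample)
sparse_elements = under_observed(element_counts, MIN_PER_ELEMENT)
sparse_categories = under_observed(category_counts, MIN_PER_CATEGORY)
```

Elements or categories listed as under-observed are not necessarily unusable, but their measures can be expected to be less stable.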

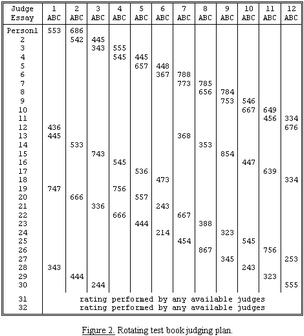

Less data-intensive, but also less precise, Rasch estimates can be obtained so long as overlap is maintained. Fig. 2 illustrates such a reduced network of observations which still connects examinees, judges and items. The parameters are linked into one frame of reference through 180 ratings which share pairs of parameters (common essays, common examinees or common judges). Accidental omissions or unintended ratings would alter the judging plan, but would not threaten the analysis. Measures are less precise than with complete data because about 84% fewer observations are made (180 ratings instead of 1152).

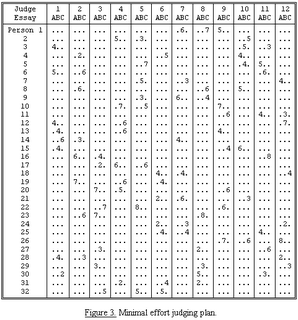

Judging is time-consuming and expensive. Under extreme circumstances, judging plans can be devised so that each performance is judged only once. Even then the statistical requirement for overlap can usually be met rather easily. Fig. 3 is a simulation of such a minimal judging plan. Each of the 32 examinees' three essays is rated by only one judge. Each of the 12 judges rates 8 essays, including 2 or 3 of each essay type. Nevertheless, the examinee-judge-essay overlap of these 96 ratings enables all parameters to be estimated unambiguously in one frame of reference. The constraints used in the assignment of essays to judges were that (1) each essay be rated only once; (2) each judge rate an examinee once at most; and (3) each judge avoid rating any one type of essay too frequently. The statistical cost of this minimal data collection is low measurement precision, but this plan requires only 96 ratings, about 8% of the data in Fig. 1. A practical refinement of this minimal plan would allow each judge to work at their own pace until all essays were graded, so that faster judges would rate more essays. A minimal judging plan of this type has been successfully implemented (Lunz et al., 1990).
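The three assignment constraints can be verified mechanically for any proposed plan. The sketch below is one hypothetical way to do so in Python; the function name, the (person, rater, essay type) triple layout, and the cap of 3 ratings per essay type per judge are assumptions, the cap being chosen to match the "2 or 3 of each essay type" described above.

```python
from collections import Counter

def check_minimal_plan(assignments, max_per_type=3):
    """Check a judging plan against the three constraints in the text.

    assignments: iterable of (person, rater, essay_type) triples.
    max_per_type: assumed cap on how often one judge rates one essay type.
    Returns a list of violation messages (empty if the plan passes).
    """
    essay_seen = Counter()   # (person, essay_type) -> times rated
    pair_seen = Counter()    # (rater, person) -> times paired
    type_load = Counter()    # (rater, essay_type) -> ratings by that judge
    for person, rater, essay_type in assignments:
        essay_seen[(person, essay_type)] += 1
        pair_seen[(rater, person)] += 1
        type_load[(rater, essay_type)] += 1

    problems = []
    # (1) each essay is rated only once
    for (person, essay_type), n in essay_seen.items():
        if n > 1:
            problems.append(f"essay {essay_type} of person {person} rated {n} times")
    # (2) each judge rates an examinee once at most
    for (rater, person), n in pair_seen.items():
        if n > 1:
            problems.append(f"rater {rater} rates person {person} {n} times")
    # (3) no judge rates one essay type too frequently
    for (rater, essay_type), n in type_load.items():
        if n > max_per_type:
            problems.append(f"rater {rater} rates essay type {essay_type} {n} times")
    return problems

# Rater 1's eight assignments from the minimal plan, without the ratings.
rater_1_rows = [(3, 1, 1), (6, 1, 1), (12, 1, 1), (13, 1, 2),
                (15, 1, 2), (28, 1, 2), (14, 1, 3), (30, 1, 3)]
violations = check_minimal_plan(rater_1_rows)
```

Passing these checks says nothing about linkage; a plan can satisfy all three constraints and still split into disconnected subsets.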

See also "Does Sparseness Matter? Examining the Use of Generalizability Theory and Many-Facet Rasch Measurement in Sparse Rating Designs", S.A. Wind et al., https://doi.org/10.1177/01466216231182148

In the Minimal Plan, Persons 3 and 6 are connected by Rater 1 and Essay A. This starts a chain of connections that links all the elements.

Title = "Minimal Judging Plan"

Facets = 3

Models = ?,?,?,R9

Labels =

1, Persons

1-32

*

2, Raters

1-12

*

3, Essays

1=A

2=B

3=C

*

data=

;Person Rater Essay Rating

3 1 1 4

6 1 1 5

12 1 1 4

13 1 2 4

15 1 2 4

28 1 2 4

14 1 3 6

30 1 3 2

16 2 1 6

19 2 1 7

4 2 2 2

8 2 2 6

14 2 2 3

6 2 3 6

23 2 3 6

28 2 3 3

20 3 1 7

23 3 1 7

29 3 1 3

16 3 2 4

17 3 2 2

27 3 2 3

22 3 3 7

32 3 3 5

2 4 1 5

17 4 1 6

10 4 2 7

20 4 2 5

31 4 2 2

12 4 3 6

13 4 3 6

19 4 3 6

22 5 1 8

32 5 1 5

2 5 2 3

7 5 2 5

9 5 2 3

5 5 3 7

10 5 3 5

17 5 3 6

18 6 1 4

21 6 1 2

24 6 1 2

19 6 2 4

25 6 2 4

32 6 2 5

4 6 3 5

31 6 3 4

9 7 1 6

14 7 1 4

1 7 2 6

18 7 2 4

21 7 2 6

7 7 3 3

24 7 3 3

25 7 3 4

27 8 1 2

30 8 1 5

31 8 1 2

23 8 2 8

29 8 2 3

1 8 3 7

8 8 3 6

9 8 3 4

1 9 1 5

10 9 1 7

13 9 1 4

22 9 2 6

26 9 2 7

11 9 3 6

15 9 3 4

20 9 3 6

4 10 1 4

8 10 1 5

15 10 1 6

3 10 2 5

5 10 2 4

2 10 3 5

21 10 3 3

26 10 3 6

5 11 1 5

11 11 1 4

25 11 1 4

6 11 2 6

30 11 2 3

3 11 3 3

16 11 3 8

27 11 3 6

7 12 1 4

26 12 1 8

28 12 1 2

11 12 2 3

12 12 2 7

24 12 2 2

18 12 3 4

29 12 3 3

; end of minimal data
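The chain of connections described for Persons 3 and 6 can be followed programmatically. The sketch below is a simplified illustration in Python, not the connectivity check performed by the analysis software: it treats every element as a node, joins the person, rater and essay of each rating, and reports the resulting groups. A single group is a necessary condition for one frame of reference, but it is not sufficient in every design. The function name and the made-up disconnected row (person 99, rater 50, essay 9) are assumptions for illustration.

```python
def connected_subsets(ratings):
    """Group elements that are linked through shared ratings (union-find).

    ratings: iterable of (person, rater, essay, rating) tuples.
    Returns a list of sets of (facet, element) nodes.
    """
    parent = {}

    def find(node):
        parent.setdefault(node, node)
        while parent[node] != node:
            parent[node] = parent[parent[node]]  # path halving
            node = parent[node]
        return node

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    for person, rater, essay, _rating in ratings:
        p, r, e = ("person", person), ("rater", rater), ("essay", essay)
        union(p, r)  # the rating links this person to this rater
        union(r, e)  # and this rater to this essay

    groups = {}
    for node in list(parent):
        groups.setdefault(find(node), set()).add(node)
    return list(groups.values())

# The first two data lines: Persons 3 and 6 share Rater 1 and Essay A (1).
linked = connected_subsets([(3, 1, 1, 4), (6, 1, 1, 5)])

# Adding a made-up row that shares no element with the others splits the plan.
split = connected_subsets([(3, 1, 1, 4), (99, 50, 9, 4)])
```

Run on all 96 data lines, a result with more than one group would indicate that some elements cannot be compared with the rest.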