When USCALE=1 (or USCALE= is omitted), measures are reported in logits. When USCALE=0.59, measures are reported in approximated probits.

Logit: A logit (log-odds unit, pronounced "low-jit") is a unit of additive measurement which is well-defined within the context of a single homogeneous test. When logit measures are compared between tests, their probabilistic meaning is maintained but their substantive meanings may differ. This is often the case when two tests of the same construct contain items of different types. Consequently, logit measures underlying different tests must be equated before the measures can be meaningfully compared. This situation is parallel to that in physics when some temperatures are measured in degrees Fahrenheit, some in Celsius, and others in Kelvin.

As a first step in the equating process, plot the pairs of measures obtained for the same elements (e.g., persons) from the two tests. You can use this plot to make a quick estimate of the nature of the relationship between the two logit measurement frameworks. If the relationship is not close to linear, the two tests may not be measuring the same thing.
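The quick check described above can also be summarized numerically. The following is a minimal sketch, not part of the software: the function name `summarize_cross_plot` and the person measures are made up for illustration, and an ordinary least-squares line is only one assumed way to describe the cross-plot.

```python
from statistics import mean

def summarize_cross_plot(test_a, test_b):
    """Return (slope, intercept, correlation) for paired logit measures.

    test_a and test_b hold measures for the same persons, in the same order.
    """
    mean_a, mean_b = mean(test_a), mean(test_b)
    sxx = sum((a - mean_a) ** 2 for a in test_a)
    syy = sum((b - mean_b) ** 2 for b in test_b)
    sxy = sum((a - mean_a) * (b - mean_b) for a, b in zip(test_a, test_b))
    slope = sxy / sxx
    intercept = mean_b - slope * mean_a
    correlation = sxy / (sxx * syy) ** 0.5
    return slope, intercept, correlation

# Hypothetical measures (in logits) for five persons on two tests.
test_a = [-1.5, -0.5, 0.0, 1.0, 2.0]
test_b = [-2.6, -0.9, 0.2, 1.8, 3.5]

slope, intercept, correlation = summarize_cross_plot(test_a, test_b)
print(f"slope={slope:.2f} intercept={intercept:.2f} r={correlation:.3f}")
```

A correlation well below 1 would suggest that the relationship is not close to linear, so the two tests may not be measuring the same thing.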

Logarithms: In Rasch measurement all logarithms, "log", are "natural" or "Napierian", sometimes abbreviated elsewhere as "ln". "Logarithms to the base 10" are written log10. Logits to the base 10 are called "lods". A measure in lods = 0.4343 * the same measure in logits. A measure in logits = 2.3026 * the same measure in lods.
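The two conversion constants are 1/ln(10) and ln(10). A small sketch, with illustrative function names that are not part of any software, shows the direction of each conversion:

```python
import math

LOGITS_PER_LOD = math.log(10)      # about 2.3026
LODS_PER_LOGIT = 1 / math.log(10)  # about 0.4343

def logits_to_lods(measure_in_logits: float) -> float:
    """Re-express a natural-log-odds measure as a base-10 log-odds measure."""
    return measure_in_logits * LODS_PER_LOGIT

def lods_to_logits(measure_in_lods: float) -> float:
    """Re-express a base-10 log-odds measure as a natural-log-odds measure."""
    return measure_in_lods * LOGITS_PER_LOD

odds = 4.0  # success is four times as likely as failure
print(round(math.log(odds), 4), "logits")
print(round(logits_to_lods(math.log(odds)), 4), "lods")
```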

Logit-to-Probability Conversion Table

The table shows the logit difference between an ability measure and an item calibration, and the corresponding probability of success on a dichotomous item. A rough approximation between -2 and +2 logits is:

Probability% = (logit ability - logit difficulty) * 20 + 50

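| Logit | Probability | Probit | Logit | Probability | Probit |
|------:|------------:|-------:|------:|------------:|-------:|
| 5.0 | 99% | 2.48 | -5.0 | 1% | -2.48 |
| 4.6 | 99% | 2.33 | -4.6 | 1% | -2.33 |
| 4.0 | 98% | 2.10 | -4.0 | 2% | -2.10 |
| 3.0 | 95% | 1.67 | -3.0 | 5% | -1.67 |
| 2.2 | 90% | 1.28 | -2.2 | 10% | -1.28 |
| 2.0 | 88% | 1.18 | -2.0 | 12% | -1.18 |
| 1.4 | 80% | 0.85 | -1.4 | 20% | -0.85 |
| 1.1 | 75% | 0.67 | -1.1 | 25% | -0.67 |
| 1.0 | 73% | 0.62 | -1.0 | 27% | -0.62 |
| 0.8 | 70% | 0.50 | -0.8 | 30% | -0.50 |
| 0.69 | 67% | 0.43 | -0.69 | 33% | -0.43 |
| 0.5 | 62% | 0.31 | -0.5 | 38% | -0.31 |
| 0.4 | 60% | 0.25 | -0.4 | 40% | -0.25 |
| 0.2 | 55% | 0.13 | -0.2 | 45% | -0.13 |
| 0.1 | 52% | 0.06 | -0.1 | 48% | -0.06 |
| 0.0 | 50% | 0.00 | 0.0 | 50% | 0.00 |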
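Values like those in the conversion table can be recomputed from the logistic function and the inverse normal distribution. The sketch below is illustrative only: the function names are assumptions, and the printed values may differ from the table in the last digit because of rounding.

```python
import math
from statistics import NormalDist

def logit_to_probability(logit_difference: float) -> float:
    """Probability of success on a dichotomous item (logistic ogive)."""
    return 1.0 / (1.0 + math.exp(-logit_difference))

def probability_to_probit(probability: float) -> float:
    """Unit-normal deviate with the same cumulative probability."""
    return NormalDist().inv_cdf(probability)

def rough_percent(logit_difference: float) -> float:
    """The rough approximation: 20 percentage points per logit around 50%."""
    return logit_difference * 20 + 50

for d in (2.0, 1.4, 1.0, 0.5, 0.0, -1.0, -2.0):
    p = logit_to_probability(d)
    print(f"{d:5.1f} logits  exact {100 * p:5.1f}%  "
          f"rough {rough_percent(d):5.1f}%  probit {probability_to_probit(p):5.2f}")
```

The rough approximation stays within a few percentage points of the exact value between -2 and +2 logits, and drifts further outside that range.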

Example with dichotomous data:

In Table 1, it is the distance between each person and each item which determines the probability.

MEASURE                            |                            MEASURE
<more> ------------------ PERSONS -+- ITEMS -------------------- <rare>
  5.0                              +                              5.0
                                   |
                                   |
                                   | XXX  (items difficult for persons) <- 4.5 logits
                                   |
                                   |
  4.0                              +                              4.0
                                   |
                               XX  |      <- 3.7 logits
                                   |
                                   |
                                   |
  3.0                              +                              3.0

The two persons are at 3.7 logits. The three items are at 4.5 logits. The difference is 3.7 - 4.5 = -0.8 logits. From the logit table above, this corresponds to a 30% probability of success for persons like these on items like these. A 30% probability of success on a 0-1 item means that the expected score on the item is 30%/100% = 0.3 score-points.
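The same arithmetic can be checked directly from the dichotomous Rasch model. This is a minimal sketch with an illustrative function name. The exact probability is about 0.31, which the table rounds to 30%.

```python
import math

def success_probability(ability: float, difficulty: float) -> float:
    """Dichotomous Rasch model: P(success) from measures in logits."""
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))

p = success_probability(ability=3.7, difficulty=4.5)

# Expected score on a 0-1 item: 1 point with probability p, otherwise 0.
expected_score = 1 * p + 0 * (1 - p)

print(round(p, 2), round(expected_score, 2))
```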

Inference with Logits

Logit distances such as 1.4 logits are applicable to individual dichotomous items. 1.4 logits is the distance between 50% success and 80% success on a dichotomous item.

Logit distances also describe the relative performance on adjacent categories of a rating scale, e.g., if in a Likert scale, "Agree" and "Strongly Agree" are equally likely to be observed at a point on the latent variable, then 1.4 logits higher, "Strongly Agree" is likely to be observed 8 times for every 2 times that "Agree" is observed.
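The 8-to-2 ratio is the odds exp(1.4), which is about 4 to 1. The sketch below assumes a Rasch rating-scale structure in which the log-odds of the upper of two adjacent categories rises by one logit for each logit of measure. The function name is illustrative.

```python
import math

def adjacent_category_odds(logits_above_equal_point: float) -> float:
    """Odds of the upper category relative to the lower adjacent category,
    measured from the point where the two are equally likely."""
    return math.exp(logits_above_equal_point)

odds = adjacent_category_odds(1.4)   # "Strongly Agree" : "Agree"
share_upper = odds / (1 + odds)      # share among those two categories only

print(round(odds, 2), round(share_upper, 2))
```

A share of about 0.8 for "Strongly Agree" against 0.2 for "Agree" is the 8-to-2 ratio described above.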

For sets of dichotomous items, or performance on a rating scale item considered as a whole, the direct interpretation of logits no longer applies. The mathematics of a probabilistic interpretation under these circumstances is complex and rarely worth the effort to perform. Under these conditions, logits are usually only of mathematical value for the computation of fit statistics - if you wish to compute your own.

Different tests usually have different probabilistic structures, so the interpretation of logits across tests is not the same as the interpretation of logits within a test. This is why test equating is necessary.

Logits and Probits

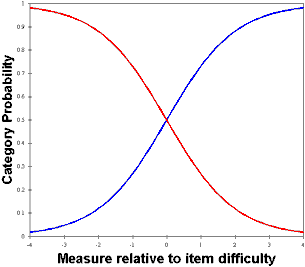

Logits are the "natural" units for the logistic ogive. Probits are the "natural" units for the unit normal cumulative distribution function, the "normal" ogive. Many statisticians are more familiar with the normal ogive, and prefer to work in probits. The normal ogive and the logistic ogive are similar, and a conversion factor of 1.7 approximately aligns them: 1 probit is approximately 1.7 logits.
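How closely the factor of 1.7 aligns the two ogives can be checked numerically. This sketch uses only the Python standard library. The grid from -4 to +4 probits and the function names are assumptions made for illustration.

```python
import math
from statistics import NormalDist

def logistic_ogive(logits: float) -> float:
    """Cumulative logistic function."""
    return 1.0 / (1.0 + math.exp(-logits))

def normal_ogive(probits: float) -> float:
    """Cumulative unit-normal distribution function."""
    return NormalDist().cdf(probits)

# Compare the two curves at every 0.01 probits from -4 to +4.
grid = [i / 100 for i in range(-400, 401)]
max_gap = max(abs(logistic_ogive(1.7 * z) - normal_ogive(z)) for z in grid)

print(f"largest difference in probability: {max_gap:.4f}")
```

On this grid the two curves differ by roughly one percentage point of probability at most.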

When the measurement units are probits, the dichotomous Rasch model is written:

log( P / (1-P) ) = 1.7 * (B - D)

To have the measures reported in probits, set USCALE = 0.59 (that is, 1/1.7 = 0.588, rounded).

But if the desired probit-unit is based on the empirical standard deviation, then set USCALE = 1 / (logit S.D.)
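The probit form of the model follows from rescaling the logit measures. As a sketch, assume that USCALE multiplies every logit measure by the same constant and ignore any shift of origin, which cancels in the difference B - D. The subscripts "logit" and "probit" are added here for illustration only:

```latex
\[
\begin{aligned}
\log\frac{P}{1-P} &= B_{\text{logit}} - D_{\text{logit}} \\
B_{\text{probit}} &= \text{USCALE}\cdot B_{\text{logit}}, \qquad
D_{\text{probit}} = \text{USCALE}\cdot D_{\text{logit}}, \qquad
\text{USCALE} = \frac{1}{1.7} \approx 0.588 \\
\log\frac{P}{1-P} &= 1.7\,\bigl(B_{\text{probit}} - D_{\text{probit}}\bigr)
\end{aligned}
\]
```

With the empirical alternative, the constant 1.7 is replaced by the logit S.D., so that one reported unit equals one standard deviation of the measures.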

Some History

Around 1940, researchers focused on the "normal ogive model". This was an IRT model, computed on the basis that the person sample has a unit normal distribution N(0,1).

The "normal ogive" model is: Probit (P) = theta - Di

where theta is a distribution, not an individual person.

But the normal ogive is difficult to compute. So they approximated the normal ogive (in probit units) with the much simpler-to-compute logistic ogive (in logit units). The approximate relationship is: logit = 1.7 probit.

IRT philosophy is still based on the N(0,1) sample distribution, and so a 1-PL IRT model is:

log(P/(1-P)) = 1.7 (theta - Di)

where theta represents a sample distribution. Di is the "one parameter".

The Rasch model takes a different approach. It does not assume any particular sample or item distribution. It uses the logistic ogive because of its mathematical properties, not because of its similarity to the cumulative normal ogive.

The Rasch model parameterizes each person individually, Bn. As a reference point it does not use the person mean (norm referencing). Instead it conventionally uses the item mean (criterion referencing). In the Rasch model there is no imputation of a normal distribution to the sample, so probits are not considered.

The Rasch model is: log(P/(1-P)) = Bn - Di

Much IRT literature asserts that "1-PL model = Rasch model". This is misleading. The mathematical equations can look similar, but their motivation is entirely different.

If you want to approximate the "normal ogive IRT model" with Rasch software, then

(a) adjust the person measures so the person mean = 0: UPMEAN=0

(b) adjust the user-scaling: probits = logits/1.7: USCALE=0.59

After this, the sample may come close to having an N(0,1) sample distribution - but not usually! So you can force S.D. = 1 unit, by setting USCALE = 1 / person S.D.
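The effect of steps (a) and (b), and of forcing S.D. = 1, can be sketched as plain arithmetic on a list of person measures. This is not how the software is implemented: the function name and the measures are made up, and the use of the population standard deviation (`pstdev`) is an assumption.

```python
from statistics import mean, pstdev

def rescale_person_measures(logit_measures, force_unit_sd=False):
    """Center person measures at 0, then rescale from logits.

    force_unit_sd=False: scale by 1/1.7 (approximate probits).
    force_unit_sd=True:  scale by 1 / person S.D. (S.D. becomes exactly 1).
    Returns the rescaled measures and the scale factor used.
    """
    center = mean(logit_measures)
    centered = [m - center for m in logit_measures]   # person mean = 0
    scale = 1 / pstdev(centered) if force_unit_sd else 1 / 1.7
    return [m * scale for m in centered], scale

# Hypothetical person measures in logits.
persons = [-1.2, -0.3, 0.4, 1.1, 2.5]

approx_probits, scale_a = rescale_person_measures(persons)
unit_sd, scale_b = rescale_person_measures(persons, force_unit_sd=True)

print(f"scale {scale_a:.3f}: mean {mean(approx_probits):.2f}, S.D. {pstdev(approx_probits):.2f}")
print(f"scale {scale_b:.3f}: mean {mean(unit_sd):.2f}, S.D. {pstdev(unit_sd):.2f}")
```

With the fixed factor of 1/1.7 the S.D. is usually not 1, which is why the second scaling is offered.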